Mental health chatbots provide instant emotional support and guidance using AI technology. They improve accessibility and awareness but have limitations such as privacy concerns and limited emotional depth. They should complement, not replace, professional mental healthcare services.

Mental health chatbots are AI-powered tools that provide instant emotional support and guidance through conversation-based interaction. They help users manage stress, anxiety, and other emotional challenges in a simple and accessible way, which improves access to mental wellness support for many people. However, they should always be used alongside professional mental healthcare services for safe, balanced, and effective emotional well-being support.

Understanding Mental Health Chatbots

Mental health chatbots are AI-driven tools designed to provide emotional support through conversation. These systems use natural language processing to understand user input and respond in a supportive and human-like manner. They are commonly used for stress relief, mood tracking, and basic counseling assistance. By analyzing user emotions, these systems can deliver more personalized responses that improve user experience. The rise of digital mental support tools has increased accessibility for people who cannot easily reach therapists. Developers are also enhancing them using emotionally intelligent chatbot technology to make interactions more empathetic. However, they still rely on programmed algorithms, which limit their ability to fully replace professional therapy.

Key Benefits of Mental Health Chatbots

These tools offer several important benefits, including 24/7 availability and instant support for users facing emotional distress. They reduce barriers such as cost and accessibility, making mental wellness support more inclusive for everyone. Users can track mood patterns and receive coping strategies based on their conversations. They are also helpful in the early detection of anxiety or depression symptoms. Another major advantage is that they provide a judgment-free environment, encouraging users to express emotions freely. With advanced chatbot performance analytics, developers can continuously improve system responses. Overall, they play a supportive role in enhancing emotional awareness and offering immediate guidance when human therapists are not available.
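The mood-tracking idea mentioned above can be illustrated with a small sketch. It assumes users self-report mood on a 1 (low) to 5 (high) scale; the scale, the window size, and the function names are hypothetical choices for illustration, not taken from any particular product.

```python
from statistics import mean


def rolling_mood_average(scores, window=3):
    """Return the average of each run of `window` consecutive mood scores."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        mean(scores[i : i + window])
        for i in range(len(scores) - window + 1)
    ]


def is_declining(scores, window=3):
    """True when the latest rolling average is lower than the first one."""
    averages = rolling_mood_average(scores, window)
    return len(averages) >= 2 and averages[-1] < averages[0]


# Hypothetical week of self-reported mood scores (1 = low, 5 = high).
week = [4, 4, 3, 3, 2, 2, 2]
print(rolling_mood_average(week))
print(is_declining(week))
```

A declining trend like this could prompt the chatbot to suggest a coping strategy or a check-in with a professional. It is a signal for attention, not a diagnosis.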

How Mental Health Chatbots Work

A mental health chatbot processes each message in stages. Natural language processing first parses the user's text. The system then analyzes emotional tone, intent, and context, often using sentiment analysis to detect signs of distress. Machine learning models use these signals to predict a suitable supportive reply and keep the conversation flowing. Over time, the models improve through exposure to more data and user interactions, which makes conversations more natural and personalized. Because responses come from data patterns and programmed rules rather than genuine empathy, these systems remain limited in handling deep psychological issues.

Role of AI in Mental Health Chatbots

AI plays a crucial role in shaping the effectiveness of mental health chatbots. These systems rely on machine learning models that continuously improve through data exposure and user interactions. They use artificial intelligence to understand emotional cues, language patterns, and user behavior more accurately. The underlying chatbot algorithms work behind the scenes to predict suitable responses and maintain smooth conversational flow. Sentiment analysis is also used to detect emotional distress and provide supportive replies. With advancements in emotionally intelligent chatbot technology, these systems are becoming more empathetic and responsive. However, they still face limitations in understanding deep psychological issues. Despite this, AI continues to enhance performance in digital mental healthcare environments, making these tools more useful and adaptive over time.
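To make the sentiment analysis step more concrete, here is a minimal sketch that scores a message against a small word list and picks a supportive reply. The word lists, function names, and reply texts are all hypothetical; production systems typically rely on trained machine learning models rather than a fixed lexicon.

```python
import re

# Tiny hypothetical lexicon; real systems learn these signals from data.
NEGATIVE = {"anxious", "stressed", "sad", "overwhelmed", "hopeless", "tired"}
POSITIVE = {"calm", "happy", "better", "hopeful", "relaxed", "good"}


def sentiment_score(message):
    """Count positive lexicon words minus negative lexicon words."""
    words = re.findall(r"[a-z']+", message.lower())
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


def choose_reply(message):
    """Pick a canned supportive reply based on the sentiment score."""
    score = sentiment_score(message)
    if score < 0:
        return "That sounds hard. Would you like to try a short breathing exercise?"
    if score > 0:
        return "I'm glad to hear that. What helped you feel this way?"
    return "Thanks for sharing. Can you tell me more about how you're feeling?"


print(choose_reply("I feel anxious and overwhelmed today"))
```

A word-count approach like this ignores negation and context, so "not happy" would score as positive. That is a simple illustration of why these systems can struggle with deeper emotional meaning.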

Risks and Limitations of Mental Health Chatbots

Although mental health chatbots provide many benefits, they also come with risks and limitations. They may misinterpret emotional context, leading to inaccurate responses. Over-reliance on them can reduce human interaction, which is essential for emotional healing. Privacy concerns are also significant, because these tools handle sensitive personal data. While chatbot performance analytics help improve systems, they cannot eliminate all risks. Chatbots cannot accurately diagnose serious mental health conditions. Additionally, AI chatbot algorithms work from data patterns, not real human empathy. Therefore, mental health chatbots should be used as supportive tools, not replacements for professional psychological care or therapy.

Ethical Concerns in Mental Health Chatbots

Ethical issues surrounding mental health chatbots are becoming increasingly important. These tools must ensure user privacy and secure data handling at all times. Transparency about how they store and process data is essential for building trust. Some users may form emotional attachments to a chatbot, raising concerns about dependency. Developers must ensure that emotionally intelligent chatbots do not manipulate user emotions. The underlying AI chatbot algorithms should be designed with ethical boundaries to avoid misleading responses. Chatbots must also clearly inform users that they are not human therapists. Ethical guidelines help ensure that mental health chatbots remain safe, responsible, and supportive tools in mental healthcare.

Mental Health Chatbots in Real-World Applications

These AI-powered support tools are now widely used across digital health platforms, workplaces, and educational systems to improve emotional well-being. They help users manage stress, anxiety, and burnout by offering instant conversational support whenever needed. Many organizations integrate them into employee wellness programs to enhance productivity, focus, and mental resilience. In education, students rely on these tools for managing exam pressure and maintaining emotional balance. They are also commonly included in mobile applications for daily mood tracking and self-care guidance. While they cannot replace professional therapists, they offer scalable and accessible mental health support globally, making emotional assistance more available to a wider population.

Key Points

- Used in workplaces for employee wellness

- Helpful for students managing exam stress

- Integrated into mobile mental health apps

- Provide instant emotional support

- Support early stress detection

| Sector | Use Case | Benefit |
| --- | --- | --- |
| Workplace | Employee mental wellness support | Reduced stress and burnout |
| Education | Student stress management | Better focus and emotional balance |
| Healthcare apps | Mood tracking & support | Improved self-awareness |
| Personal use | Daily emotional check-ins | Instant comfort and guidance |

Best Practices for Using Mental Health Chatbots

To use mental health chatbots effectively, users should clearly understand their limitations. These tools are best suited for emotional support and guidance, not medical diagnosis or crisis intervention. It is always recommended to combine them with professional therapy when necessary. Developers should continuously improve chatbot performance analytics to enhance response accuracy and user experience. Strong privacy and data security measures are essential to protect sensitive user information. Users should avoid becoming overly dependent on these tools for emotional stability. The underlying AI systems should be regularly updated to reduce errors and improve reliability. Emotionally intelligent chatbot models should also be tested for ethical compliance to ensure safe and responsible use.
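Two of these practices, disclosing that the chatbot is not a human therapist and handing crisis situations over to people, can be sketched as a small guard around each reply. The phrases, messages, and function name below are hypothetical and for illustration only; a real product would rely on clinically reviewed criteria and region-appropriate crisis resources.

```python
DISCLOSURE = "I'm an automated assistant, not a human therapist."
ESCALATION = (
    "I'm not able to help with a crisis. Please contact local emergency "
    "services or a mental health professional right away."
)

# Hypothetical trigger phrases for illustration only.
CRISIS_PHRASES = ("hurt myself", "end my life", "can't go on")


def guard_reply(user_message, draft_reply, first_turn=False):
    """Apply crisis escalation and first-turn disclosure to a draft reply."""
    text = user_message.lower()
    # Crisis messages are never answered by the chatbot itself.
    if any(phrase in text for phrase in CRISIS_PHRASES):
        return ESCALATION
    # Tell new users up front that they are talking to software.
    if first_turn:
        return f"{DISCLOSURE} {draft_reply}"
    return draft_reply


print(guard_reply("Hi, rough day at work", "Want to talk about it?", first_turn=True))
```

Simple phrase matching will miss many ways people express distress, so a check like this is a floor, not a substitute for human oversight.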

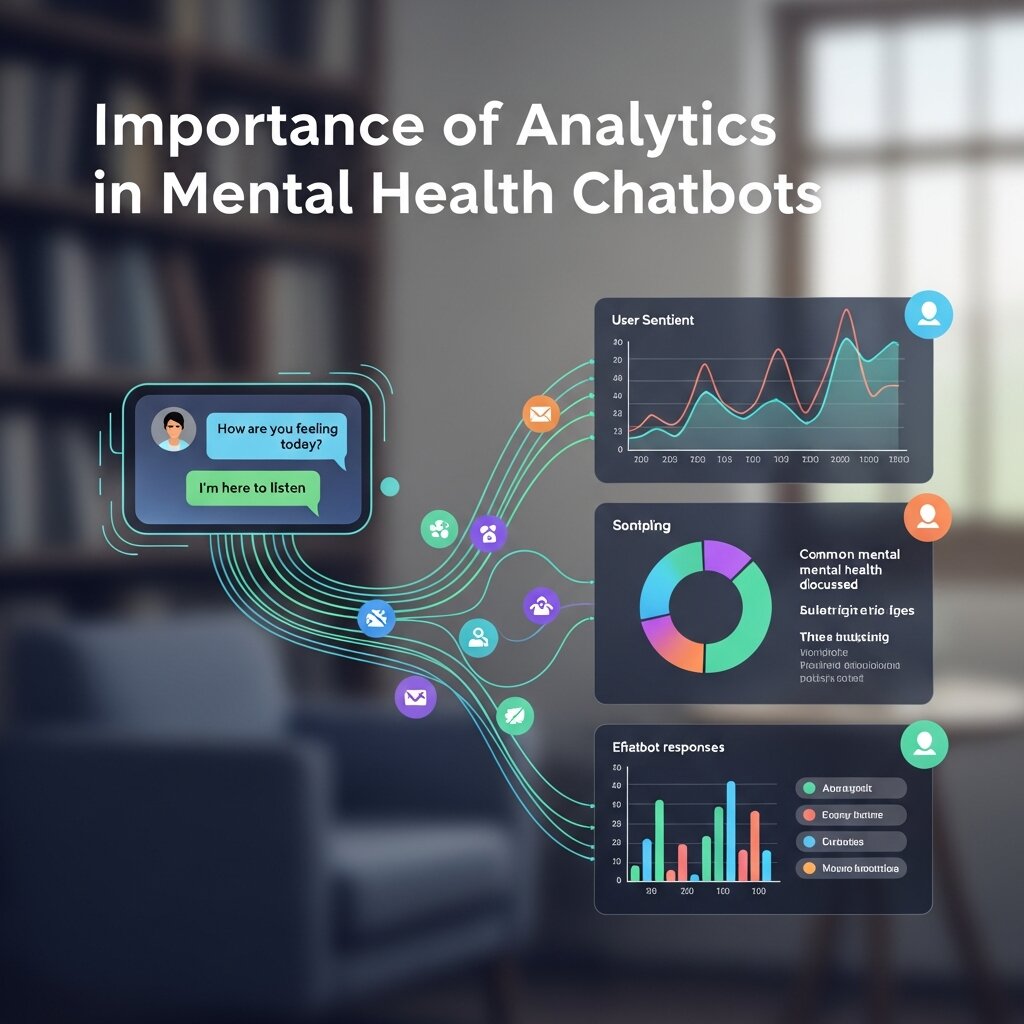

Importance of Analytics in Mental Health Chatbots

Chatbot performance analytics play a vital role in improving digital mental health systems by helping developers understand user behavior, emotional trends, and system accuracy. These insights allow the platform to refine its responses and improve the quality of engagement over time. By analyzing interaction data, these systems become more adaptive and effective in real-world situations. The underlying AI chatbot algorithms are continuously improved through feedback loops to enhance performance and response relevance. Emotionally intelligent models also contribute by improving emotional accuracy and user satisfaction. Overall, analytics ensure that these tools remain efficient, user-friendly, and capable of evolving with changing user needs in modern digital mental health support.
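As a rough illustration of such a feedback loop, the sketch below aggregates helpful or not-helpful ratings per reply category and flags categories that fall under a threshold. The log format, category names, and 0.5 threshold are assumptions made for this example, not a description of any specific analytics product.

```python
from collections import defaultdict


def helpfulness_by_category(feedback):
    """Return the share of helpful ratings for each reply category."""
    totals = defaultdict(lambda: [0, 0])  # category -> [helpful, total]
    for category, helpful in feedback:
        totals[category][0] += int(helpful)
        totals[category][1] += 1
    return {cat: helpful / total for cat, (helpful, total) in totals.items()}


def needs_review(feedback, threshold=0.5):
    """List categories whose helpful share is below the threshold."""
    rates = helpfulness_by_category(feedback)
    return sorted(cat for cat, rate in rates.items() if rate < threshold)


# Hypothetical feedback log: (reply category, was the reply rated helpful?)
log = [
    ("breathing", True),
    ("breathing", True),
    ("journaling", False),
    ("journaling", True),
    ("sleep", False),
]
print(helpfulness_by_category(log))
print(needs_review(log))
```

Flagged categories would then go to a human reviewer, who decides whether the replies need rewriting.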

Challenges in Developing Mental Health Chatbots

Developing mental health chatbots is complex due to the need for emotional accuracy, data privacy, and ethical responsibility. While AI technology has improved significantly, understanding deep human emotions remains a major challenge. Developers must ensure that these chatbots provide safe, accurate, and non-harmful responses. Another challenge is balancing automation with human-like empathy. Additionally, data security is critical because users share sensitive emotional information. Despite these challenges, continuous improvements in AI chatbot algorithms and emotionally intelligent chatbots are helping enhance performance and reliability.

Key Points

- Difficulty in understanding deep emotions

- Privacy and data security concerns

- Need for ethical AI design

- Balancing automation and empathy

- Limited diagnostic ability

| Challenge Area | Description | Impact on Users |
| --- | --- | --- |
| Emotional accuracy | Misinterpretation of feelings | Incorrect responses |
| Data privacy | Sensitive user data handling | Trust concerns |
| Ethical concerns | Risk of emotional dependency | User over-reliance |
| AI limitations | Lack of human-like judgment | Reduced effectiveness |

Future of Mental Health Chatbots

The future of mental health chatbots looks very promising as artificial intelligence technology continues to evolve rapidly. These systems are expected to become more personalized, adaptive, and emotionally aware. With advancements in emotionally intelligent chatbot systems, future tools will better recognize and naturally respond to human emotions. They may also integrate with wearable devices to monitor stress levels and mental well-being in real time. Improved AI chatbot algorithms will enable deeper conversations and more accurate responses. These tools will play a stronger role in preventive mental healthcare, although human supervision will remain essential to ensure safety and reliability in sensitive situations.

Conclusion

In conclusion, mental health chatbots are transforming how people access emotional and psychological support in today’s digital world. They provide instant, scalable, and convenient mental wellness assistance to users across different backgrounds. While they offer valuable guidance and accessibility, they also come with limitations such as privacy concerns and reduced emotional depth. Understanding how these systems function helps users make informed decisions. With continuous improvements in emotionally intelligent chatbot technology and advanced AI systems, their future remains bright. However, they should always act as supportive tools that complement, not replace, professional mental healthcare services.

Frequently Asked Questions

1: What are mental health chatbots?

Mental health chatbots are AI-powered automation tools designed to provide emotional support through conversation. They help users manage stress, anxiety, and mood-related issues by offering guidance, coping strategies, and supportive responses. This makes mental wellness support more accessible, immediate, and available anytime for users who need basic emotional assistance.

2: How do mental health chatbots work?

Mental health chatbots use AI, natural language processing, and machine learning to understand user messages. They analyze emotional tone, intent, and context, then respond with supportive replies. These systems improve over time by learning from data, making conversations more natural, personalized, and helpful for emotional well-being support.

3: Are mental health chatbots effective?

Mental health chatbots can be effective for basic emotional support, stress relief, and self-awareness. However, they are not a replacement for professional therapy. They work best as supportive tools that help users manage daily emotional challenges, while serious mental health conditions still require human professionals.

4: Can mental health chatbots replace therapists?

No, mental health chatbots cannot replace human therapists. They lack clinical training and deep emotional understanding. Instead, they provide supportive conversations, coping suggestions, and guidance, serving as a first step toward professional mental healthcare when needed.

5: Are mental health chatbots safe to use?

Mental health chatbots are generally safe when used responsibly. However, users should avoid sharing highly sensitive personal data. Strong privacy policies and secure systems are important, but professional help should always be prioritized for serious mental health concerns.

6: What are the benefits of mental health chatbots?

Mental health chatbots offer 24/7 availability, instant responses, and affordable emotional support. They help users manage stress, track moods, and practice self-care techniques. These advantages make mental health support more accessible, especially for individuals with limited access to therapists or counseling services.

7: What are the risks of mental health chatbots?

Risks include inaccurate responses, emotional dependency, and privacy concerns. They may misunderstand complex emotions and cannot provide a proper diagnosis. Users should avoid relying on them alone for serious psychological issues or crisis situations.

8: Do mental health chatbots use real AI?

Yes, mental health chatbots use real AI technologies such as machine learning and natural language processing. These systems analyze user input, detect emotions, and generate responses. However, they still operate within programmed limits and do not possess true human empathy.

9: Can mental health chatbots understand emotions?

Mental health chatbots can recognize basic emotional patterns using sentiment analysis. However, they do not fully understand human emotions like trained therapists. Their responses are based on data patterns, making them helpful but limited in emotional depth.

10: What is the future of mental health chatbots?

The future looks promising with advancements in AI, personalization, and emotional intelligence. These tools may integrate with wearable devices and health apps for better real-time support. However, human therapists will remain essential for complex mental healthcare needs and deeper emotional care.
