Ethical AI Chatbots and Responsible Use

Ethical considerations matter in modern AI systems, and AI chatbot RAG (retrieval-augmented generation) supports more responsible AI usage. Because it grounds answers in retrieved, verified data sources, it reduces the risk of generating misleading or harmful content, which makes chatbot interactions safer and more transparent. RAG does not guarantee fairness or accuracy on its own: the quality of the answers depends on the quality of the sources. Businesses can set guidelines that control which data sources the chatbot may use, and relying on diverse, trusted datasets helps limit bias. As AI adoption grows, ethical design becomes essential for building user trust and long-term reliability in digital communication systems.
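As a rough illustration of controlling data sources, the sketch below keeps only passages from an approved list and labels each one with its origin so an answer can be traced back. The source names, the `TRUSTED_SOURCES` set, and the passage dictionaries are all hypothetical; a real deployment would define its own list and review process.

```python
# Hypothetical allowlist of vetted sources (an assumption for illustration).
TRUSTED_SOURCES = frozenset({"policy-handbook", "product-docs"})


def filter_trusted(passages, trusted=TRUSTED_SOURCES):
    """Keep only passages whose source is on the allowlist."""
    return [p for p in passages if p["source"] in trusted]


def format_with_citations(passages):
    """Number each passage and name its source so answers stay traceable."""
    return "\n".join(
        f"[{i}] {p['text']} (source: {p['source']})"
        for i, p in enumerate(passages, start=1)
    )


if __name__ == "__main__":
    retrieved = [
        {"text": "Returns are accepted within 30 days.", "source": "policy-handbook"},
        {"text": "Returns are never accepted.", "source": "anonymous-forum"},
    ]
    print(format_with_citations(filter_trusted(retrieved)))
```

Filtering before generation means the unvetted passage never reaches the model, which is simpler to audit than trying to correct an answer afterwards.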

Data Privacy in AI Chatbot Systems

Data privacy is a critical concern in AI development, and AI chatbot RAG addresses it by limiting unnecessary data exposure. Instead of storing all data in the model, it keeps data in an external source and retrieves only the relevant information when needed. This can reduce the risk of data leaks, because access to the source can be restricted, audited, and updated without retraining the model. Businesses can control which data sources are used and align them with privacy regulations. Because the model itself depends less on stored data, sensitive user information can be handled at query time under the same access rules as the rest of the business's systems, which strengthens user trust. This approach is widely used in industries where confidentiality and security are top priorities, such as healthcare and finance, and it makes chatbot data privacy much more manageable.
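One way to picture "retrieving only relevant information" under privacy controls is the sketch below, which filters records by the requester's role and masks emails and phone numbers before any text is passed to a model. The record layout, role names, and regular expressions are assumptions for illustration; the patterns are deliberately simple and would not catch every format of personal data.

```python
import re

# Simplified patterns (assumptions); real systems need broader PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Mask email addresses and phone numbers in a passage."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def allowed_passages(records, user_role):
    """Return redacted text only for records the given role may read."""
    return [redact(r["text"]) for r in records if user_role in r["roles"]]


if __name__ == "__main__":
    records = [
        {"text": "Customer phone: 555-123-4567", "roles": {"support"}},
        {"text": "Payroll summary for Q3", "roles": {"hr"}},
    ]
    print(allowed_passages(records, "support"))
```

Applying the role check before retrieval results reach the prompt keeps restricted records out of the model's context entirely, rather than relying on the model to withhold them.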

1: What is AI chatbot RAG?

AI chatbot RAG (retrieval-augmented generation) is a system that combines retrieval and generation to improve chatbot responses. It fetches up-to-date information from external sources before generating answers, making interactions more accurate, relevant, and reliable for users across different industries and applications.

2: How does AI chatbot RAG work?

AI chatbot RAG works by retrieving relevant information from databases or documents first, then using generative AI to create responses. This ensures answers are grounded in real data, reducing errors and improving chatbot intelligence, accuracy, and overall user experience.
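The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCUMENTS` list is a made-up knowledge base, retrieval uses simple word overlap where real systems typically use vector search, and `generate` is a placeholder for whatever language model call an application uses.

```python
import re
from collections import Counter

# Hypothetical knowledge base; in practice these would be your own documents.
DOCUMENTS = [
    "Refunds are issued within 14 days of a return request.",
    "Standard shipping takes 3 to 5 business days.",
    "Password resets are done from the account settings page.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, document):
    """Count how many query tokens also appear in the document."""
    return sum((Counter(tokenize(query)) & Counter(tokenize(document))).values())


def retrieve(query, documents, k=2):
    """Return up to k documents that share at least one token with the query."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return [doc for doc in ranked[:k] if score(query, doc) > 0]


def build_prompt(query, passages):
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


def answer(query, generate):
    """Retrieve first, then hand the grounded prompt to the model callable."""
    return generate(build_prompt(query, retrieve(query, DOCUMENTS)))


if __name__ == "__main__":
    # Echo the prompt instead of calling a real model.
    print(answer("How long does shipping take?", generate=lambda prompt: prompt))
```

Passing `generate` in as a parameter keeps the retrieval step testable on its own and makes it clear that the model only sees what retrieval selected.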

3: Why is AI chatbot RAG important?

AI chatbot RAG is important because it improves response accuracy, reduces misinformation, and enhances user trust. It allows chatbots to access updated information instantly, making them more effective in customer service, CRM systems, and knowledge-based applications across industries.

4: How does AI chatbot RAG improve CRM systems?

AI chatbot RAG improves CRM systems by providing personalized responses using real-time customer data. It helps businesses manage leads, track interactions, and deliver faster support, improving customer satisfaction and engagement while maintaining efficient and automated communication workflows.
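To make the CRM case concrete, the sketch below looks up a customer record and places it ahead of the question, so the generated reply can reflect that customer's plan and open tickets. The `Customer` fields, the in-memory `CRM` dictionary, and the ID format are hypothetical stand-ins for a real CRM API.

```python
from dataclasses import dataclass, field


@dataclass
class Customer:
    name: str
    plan: str
    open_tickets: list = field(default_factory=list)


# Hypothetical in-memory CRM; a real system would query its CRM service.
CRM = {"c-1001": Customer("Dana", "Pro", ["Invoice not received"])}


def crm_context(customer_id):
    """Summarize the customer record as plain text for the prompt."""
    customer = CRM.get(customer_id)
    if customer is None:
        return "No customer record found."
    tickets = "; ".join(customer.open_tickets) or "none"
    return f"Customer: {customer.name}\nPlan: {customer.plan}\nOpen tickets: {tickets}"


def build_crm_prompt(customer_id, question):
    """Combine the retrieved record with the customer's question."""
    return (
        f"{crm_context(customer_id)}\n\n"
        f"Question: {question}\n"
        "Answer using the customer details above."
    )


if __name__ == "__main__":
    print(build_crm_prompt("c-1001", "Where is my invoice?"))
```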

5: Is AI chatbot RAG good for customer support?

Yes, AI chatbot RAG is excellent for customer support. It retrieves accurate troubleshooting information and FAQs before responding. This reduces response time, improves accuracy, and ensures customers receive helpful, consistent, and efficient support experiences across multiple channels.

6: How does AI chatbot RAG support ethical AI chatbots?

AI chatbot RAG supports ethical AI chatbots by using verified and controlled data sources. This helps reduce bias, misinformation, and harmful outputs. It promotes transparency, fairness, and responsible AI usage, leading to safer and more trustworthy chatbot interactions for users.

7: What role does AI chatbot RAG play in data privacy?

AI chatbot RAG improves data privacy by retrieving only the necessary information at query time instead of storing large datasets in the model. This minimizes exposure risks and enhances security. It also allows businesses to control data usage and comply with privacy regulations.

8: Can AI chatbot RAG be used in small businesses?

Yes, AI chatbot RAG can be used in small businesses. It helps automate customer interactions, manage queries, and improve efficiency without requiring large infrastructure. It is scalable, cost-effective, and supports growth by enhancing customer engagement and support services.

9: What industries benefit from AI chatbot RAG?

Industries like healthcare, finance, e-commerce, education, and customer service benefit greatly from AI chatbot RAG. It improves accuracy, automates support, and enhances data handling, making operations more efficient, secure, and responsive to user needs in real time.

10: What is the future of AI chatbot RAG?

AI chatbot RAG is expected to keep advancing, with better context understanding and real-time learning capabilities. It is likely to become more intelligent, scalable, and widely used in automation, changing how businesses interact with users and manage digital communication systems.

AI Chatbots in Supply Chain Management: Boost Efficiency

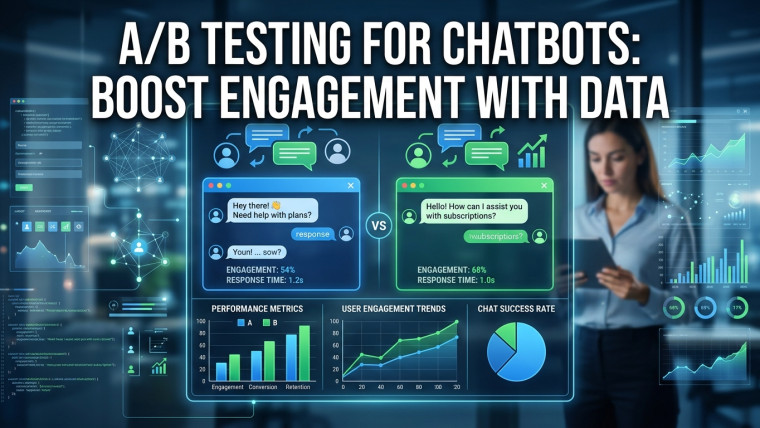

A/B Testing for Chatbots: Boost Engagement with Data

Smart Chatbots Revolutionizing Supply Chain Management

Supply Chain Chatbots: Streamlining Logistics with AI