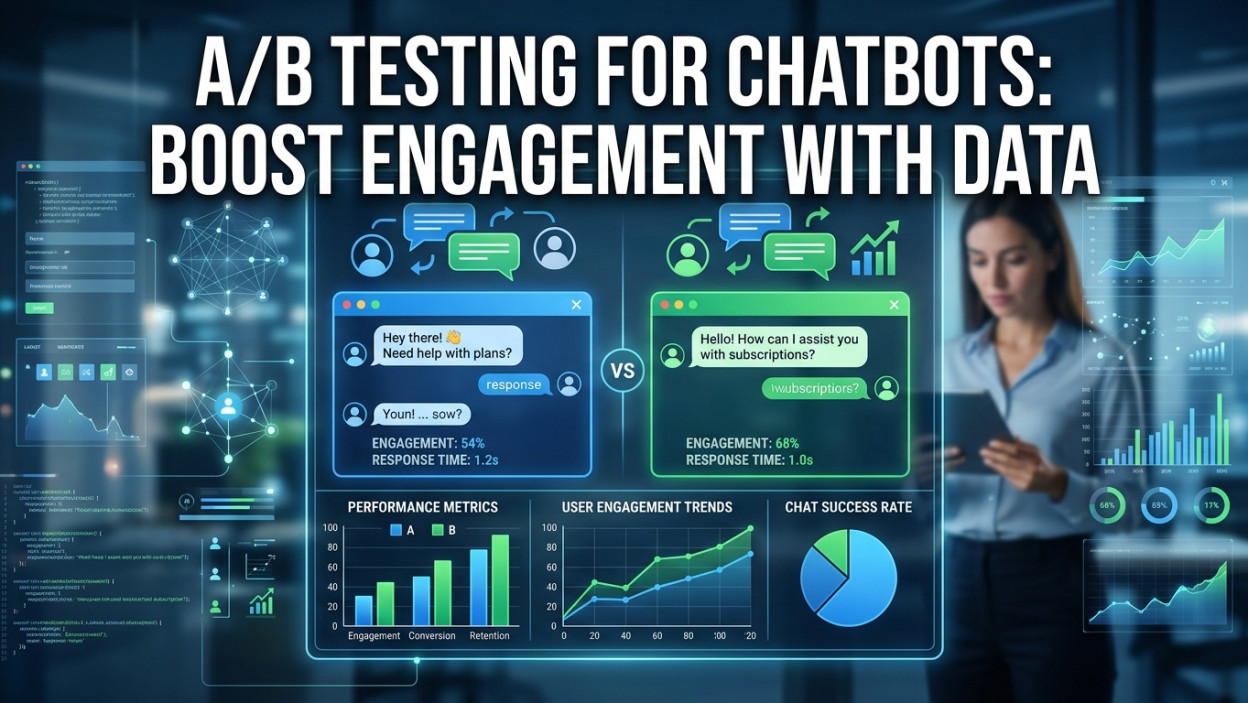

Are you struggling to get users to interact with your automated assistant? A/B testing gives you a structured way to raise engagement and measure the results.

Optimizing your conversational assistant requires more than just guesswork. This comprehensive guide covers everything from establishing clear hypotheses to tracking crucial metrics. By running continuous experiments, you can refine your messaging, improve user satisfaction, and dramatically increase your overall return on investment.

Understanding the Fundamentals of Chatbot Optimization

When you deploy a digital assistant, you are creating a new touchpoint for your audience. However, simply launching it is not enough. You must continuously refine how it interacts with users. A/B testing provides a structured framework for comparing two or more variations of your assistant to determine which one performs better based on specific data points.

By testing different greetings, conversation flows, and response times, you take the guesswork out of the equation. This data-driven approach ensures that every change you make is backed by real user behavior rather than intuition alone. When you integrate conversational AI into your website or application, its success depends entirely on how naturally it guides the user toward a resolution or conversion.

The Science Behind the Data

Running an experiment means you split your incoming traffic into different groups. One group sees the original version, known as the control. The other group sees the variant, which contains the specific change you want to measure. Through careful observation, you can identify which version leads to better outcomes, whether that means more captured leads, higher sales, or resolved support tickets.
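
One way to split traffic is to hash a stable user identifier, so each visitor lands in the same group on every visit. The sketch below is a minimal illustration, not a prescribed method: the function name `assign_variant`, the experiment label, and the 50/50 split are all assumptions made for the example.

```python
import hashlib


def assign_variant(user_id: str, experiment: str,
                   variants: tuple[str, ...] = ("control", "variant")) -> str:
    """Deterministically map a user to one group of an experiment."""
    # Including the experiment name keeps assignments independent across tests.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Interpret the hash as an integer and pick a bucket by remainder.
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same user always receives the same answer for a given experiment.
print(assign_variant("user-42", "welcome-test"))
```

Because the assignment depends only on the identifier and the experiment name, no lookup table is needed to keep a returning visitor in the same group.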

Aligning Intent with Outcomes

Every successful experiment begins with understanding user intent and behavior. Are your users looking for quick informational answers, or are they trying to make a purchase? By aligning your variants with these distinct intents, you can improve your conversion rate. If a user wants to track an order, a long, conversational greeting might frustrate them. Testing a concise, transactional greeting against a friendly, conversational one will reveal which one your audience prefers.

Why Your Business Needs to Run Experiments

Boosting Customer Satisfaction

A poor chatbot experience can severely damage your brand reputation. When users encounter a dead end or an unhelpful response, they leave. By testing different fallback responses—the messages sent when the bot does not understand the user—you can reduce frustration. For instance, testing a simple “I don’t understand” against a helpful prompt that offers to connect the user to a human agent can significantly impact your customer retention metrics.

Driving Tangible Revenue

Chatbots are not just for customer support; they are powerful sales tools. If you use your bot to capture leads or promote products, testing different calls-to-action (CTAs) is critical. A slight tweak in the wording of a button or the timing of a promotional message can lead to massive gains in revenue. When you link these experiments to your sales funnel strategies, the financial impact becomes clear and measurable.

Key Metrics to Track for Success

You cannot improve what you do not measure. Before launching any experiment, you must define the key performance indicators (KPIs) that will determine success or failure.

Activation Rate and Fallback Rate

The activation rate measures how many users engage with your bot after it sends an initial message. If your activation rate is low, you should test different welcome messages. The fallback rate measures how often your bot fails to understand a query. Testing different natural language processing models or adding quick-reply buttons can help lower this rate, ensuring smoother conversations.
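
Both rates are simple ratios. The sketch below shows one way to compute them, assuming you can count greeted users, engaged users, incoming messages, and fallback responses per variant. The function names and the sample counts are hypothetical.

```python
def activation_rate(users_greeted: int, users_engaged: int) -> float:
    """Share of greeted users who replied or clicked at least once."""
    return users_engaged / users_greeted if users_greeted else 0.0


def fallback_rate(messages_received: int, fallback_responses: int) -> float:
    """Share of user messages the bot answered with a fallback response."""
    return fallback_responses / messages_received if messages_received else 0.0


# Hypothetical counts for one variant over the test period.
print(f"activation: {activation_rate(1200, 300):.1%}")
print(f"fallback:   {fallback_rate(2500, 200):.1%}")
```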

Retention Rate and Goal Completion

Retention rate looks at how many users return to interact with your assistant over time. A high retention rate indicates that users find the tool valuable. Goal completion, on the other hand, tracks specific actions, such as booking a meeting or submitting an email address. A/B testing different user flows will show you the exact sequence of messages that yields the highest goal completion rate.
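
As a rough sketch, retention can be computed from two sets of user identifiers and goal completion from two counts. The period definitions, function names, and sample values below are assumptions for illustration. Your analytics setup may define a returning user differently.

```python
def retention_rate(first_period_users: set[str], later_period_users: set[str]) -> float:
    """Share of users from the first period who came back in the later one."""
    if not first_period_users:
        return 0.0
    returning = first_period_users & later_period_users
    return len(returning) / len(first_period_users)


def goal_completion_rate(conversations_started: int, goals_completed: int) -> float:
    """Share of conversations that ended in the target action."""
    return goals_completed / conversations_started if conversations_started else 0.0


week_1 = {"ana", "ben", "cho", "dev"}
week_2 = {"ben", "dev", "eli"}  # "eli" is new, so only two users returned
print(retention_rate(week_1, week_2))
print(goal_completion_rate(conversations_started=120, goals_completed=30))
```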

Step-by-Step Guide to Launching Your First Experiment

Creating a successful experiment requires a methodical approach. Follow these steps to ensure your data is accurate and actionable.

Step 1: Craft a Strong Hypothesis

Every test starts with a hypothesis. This is an educated guess about what will happen when you make a change. A good hypothesis is specific and measurable. For example: “Changing the welcome message from ‘Hello’ to ‘How can I save you time today?’ will increase the activation rate by 15%.” This clear statement gives your A/B testing a precise focus.

Step 2: Design the Variants

Once you have your hypothesis, build your variants. Keep things simple by changing only one element at a time. If you change the text, the color of the chat window, and the response delay all at once, you will not know which change caused the difference in performance. Stick to testing a single variable for clear, conclusive data.

Step 3: Determine Sample Size and Duration

To achieve statistical significance, you need enough data. Using an experiment duration calculator helps you understand how long the test should run. Ending a test too early can lead to false positives. Make sure you allow the experiment to run through a full business cycle—usually at least one to two weeks—to account for weekend and weekday traffic variations.
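
If you do not have a calculator at hand, the standard normal-approximation formula for comparing two proportions gives a rough estimate. The sketch below assumes a two-sided 5% significance level and 80% power, which are common defaults rather than values taken from this guide. The 20% baseline, the 23% target, and the 500 daily users are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline: float, expected: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed in EACH group to detect baseline -> expected."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    n = (z_alpha + z_beta) ** 2 * variance / (expected - baseline) ** 2
    return math.ceil(n)


def days_needed(n_per_group: int, daily_users: int, groups: int = 2) -> int:
    """Days required if all daily users are split evenly across the groups."""
    return math.ceil(groups * n_per_group / daily_users)


# Hypothetical: 20% activation today, hoping for 23% (a 15% relative lift).
n = sample_size_per_group(baseline=0.20, expected=0.23)
print(n, "users per group,", days_needed(n, daily_users=500), "days")
```

Smaller expected differences push the required sample size up quickly, which is why subtle changes often need weeks of traffic. If the estimate comes out shorter than a week, it is still worth running a full business cycle.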

Step 4: Analyze and Iterate

When the test concludes, analyze the results. Did the variant beat the control? If so, implement the winning change. If the test was inconclusive or the control won, use the insights gained to formulate a new hypothesis. Continuous iterative testing is the secret to long-term digital marketing success.
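
To decide whether the variant really beat the control, one common choice is a pooled two-proportion z-test. This guide does not name a specific test, so treat the sketch below as one reasonable option. The conversion counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for variant B versus control A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so nothing to distinguish
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control 600/3000 activated, variant 690/3000.
z, p = two_proportion_z_test(600, 3000, 690, 3000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("significant at 5%" if p < 0.05 else "inconclusive")
```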

Crucial Elements You Should Be Testing

The Welcome Message

The welcome message is your digital handshake. Test a generic greeting against a highly personalized one. Try using emojis in one variant and a strictly professional tone in another. You can also test the timing—whether the chat window pops up immediately upon page load or after the user has been on the page for 30 seconds.

Button Copy vs. Free Text

Do your users prefer typing out their questions, or do they prefer clicking predefined buttons? Testing an open-ended question (“What can I help you with?”) against a menu of buttons (“Track Order,” “Return Item,” “Speak to Agent”) can drastically change how users interact with your system.

Tone of Voice

Your bot’s personality matters. Test a formal, corporate tone against a casual, witty tone. Depending on your industry and target audience, you might find that users engage much longer with a bot that uses humor and GIFs than with one that is purely transactional.

Comparison Table: A/B Testing vs. Multivariate Testing

| Feature | A/B Testing | Multivariate Testing |
|---|---|---|
| Concept | Compares two distinct versions (A and B). | Compares multiple variables simultaneously. |
| Traffic Requirement | Low to medium traffic required for significance. | High traffic required to test all combinations. |
| Complexity | Simple to set up and analyze. | Complex, requires advanced statistical analysis. |
| Best Used For | Testing major design or copy changes. | Refining multiple small elements on a highly trafficked page. |
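
The traffic difference comes from the number of combinations a multivariate test has to fill. The sketch below enumerates them for three elements discussed in this guide. The specific options are illustrative assumptions.

```python
from itertools import product

# Illustrative options for three elements of the conversation.
greetings = ["Hello", "How can I save you time today?"]
input_styles = ["free text", "buttons"]
tones = ["formal", "casual"]

# A multivariate test needs traffic for every combination of options.
combinations = list(product(greetings, input_styles, tones))
print(len(combinations), "combinations to fill with traffic")

for greeting, input_style, tone in combinations:
    print(f"- {greeting!r} / {input_style} / {tone}")
```

An A/B test of the greeting alone would split traffic two ways. The full multivariate version splits it eight ways, so each cell fills far more slowly.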

Common Mistakes to Avoid

Even seasoned marketers make errors when running experiments. Avoid these common pitfalls to keep your data clean.

- Testing Too Many Variables: Changing the greeting, the avatar, and the button color in one variant makes it impossible to know which element impacted the metric.

- Ending Tests Too Early: Impatience leads to bad data. Always wait for statistical significance before declaring a winner.

- Ignoring Segmented Data: A variant might perform poorly overall but exceptionally well for mobile users. Always segment your data by device, traffic source, and user type.

- Forgetting the Control: You must always run your variant at the same time as the original version to account for external factors like seasonality.
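
The segmentation pitfall in the list above is easy to check once each user record carries a segment label. The sketch below groups hypothetical records by device and variant. The field names `device`, `variant`, and `converted` are assumptions about how your data is stored.

```python
from collections import defaultdict


def conversion_by_segment(records: list[dict]) -> dict[tuple[str, str], float]:
    """Conversion rate for each (device, variant) pair."""
    totals = defaultdict(lambda: {"users": 0, "conversions": 0})
    for record in records:
        key = (record["device"], record["variant"])
        totals[key]["users"] += 1
        totals[key]["conversions"] += int(record["converted"])
    return {key: t["conversions"] / t["users"] for key, t in totals.items()}


# Hypothetical records: both groups convert 2 of 4 users overall,
# but the variant does better on mobile and worse on desktop.
records = [
    {"device": "mobile", "variant": "control", "converted": True},
    {"device": "mobile", "variant": "control", "converted": False},
    {"device": "mobile", "variant": "variant", "converted": True},
    {"device": "mobile", "variant": "variant", "converted": True},
    {"device": "desktop", "variant": "control", "converted": True},
    {"device": "desktop", "variant": "control", "converted": False},
    {"device": "desktop", "variant": "variant", "converted": False},
    {"device": "desktop", "variant": "variant", "converted": False},
]

for (device, variant), rate in sorted(conversion_by_segment(records).items()):
    print(f"{device:8} {variant:8} {rate:.0%}")
```

Segments with very few users produce noisy rates, so treat a segment-level difference as a lead for a follow-up test rather than as a result in itself.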

Pro Tips and Expert Insights

To elevate your optimization game, consider these expert strategies.

First, heavily focus on your user onboarding experience. The first three messages dictate whether a user stays or leaves. Make them count.

Second, utilize iterative testing. Do not just stop after one win. If a new welcome message increases engagement by 10%, take that new winning message and test the response delay time. Stacking small wins leads to massive compounding growth over time.

Finally, point your team to authoritative sources, such as research papers on conversational logic, so they have deeper context when building these systems.
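
The compounding effect mentioned in the second tip is plain multiplication. The sketch below assumes each lift applies to the same metric and that the wins do not overlap or cancel each other, which real experiments do not guarantee. The lift values are hypothetical.

```python
from math import prod


def compounded_lift(lifts: list[float]) -> float:
    """Total relative lift when each win multiplies the previous result."""
    return prod(1 + lift for lift in lifts) - 1


# Hypothetical sequence of wins: +10%, then +5%, then +8%.
total = compounded_lift([0.10, 0.05, 0.08])
print(f"{total:.2%}")  # slightly more than the 23% you get by simply adding
```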

The Future of Chatbot Optimization

Conclusion

Frequently Asked Questions

1. What is the primary benefit of A/B testing a chatbot?

The primary benefit is removing guesswork. By testing two variations, you rely on actual user data to make decisions, which consistently leads to higher engagement, better lead generation, and improved customer satisfaction.

2. How long should an experiment run?

An experiment should run until it reaches statistical significance, which typically takes one to two weeks. Running it over full weeks accounts for traffic fluctuations between weekdays and weekends, so your optimization decisions rest on representative data.

3. Can I test more than two variants at once?

Yes, this is known as A/B/n testing: you compare multiple chatbot variations against a control version. However, adding more variants requires significantly higher traffic to achieve statistical significance.

4. What is the difference between A/B testing and multivariate testing?

A/B testing compares two or more complete chatbot versions by changing one major element at a time. Multivariate testing analyzes several smaller changes simultaneously to determine the best-performing combination. While multivariate testing offers deeper insights, A/B testing is simpler and requires less traffic.

5. What is a good activation rate for a chatbot?

A strong activation rate for a chatbot usually falls between 20% and 30%, depending on industry and audience behavior. Continuous testing and optimization can raise it over time, helping more users engage with your chatbot.

6. Why did my experiment results come back inconclusive?

Inconclusive results often happen when the tested change is too minor to influence user behavior, or when the experiment lacks enough traffic or runs too briefly. Running the experiment longer usually provides more reliable and actionable insights.

7. Should I test the bot’s avatar?

Yes, A/B testing chatbot avatars can significantly impact trust and engagement. Testing a human avatar, animated character, or brand logo helps identify which visual style creates stronger user interaction and improves overall chatbot performance.

8. How do I prevent testing from annoying my users?

To avoid frustrating users during A/B testing, ensure each visitor consistently sees the same chatbot variation throughout the experiment. Switching variants repeatedly can confuse users and negatively affect both engagement metrics and overall user experience.

9. Can A/B testing improve my customer support resolution time?

Absolutely. A/B testing different chatbot conversation flows, routing questions, and automated replies helps identify the fastest and most effective support process. This improves resolution times while reducing the need for human intervention.
