Ethical AI chatbots are designed to promote fairness, transparency, privacy, and accessibility in digital communication. They help reduce bias, build user trust, and support responsible AI adoption across industries while delivering accurate, inclusive, and accountable conversational experiences worldwide.

Understanding Ethical AI Chatbots

Ethical AI chatbots are conversational systems designed to interact with users according to the principles of fairness, transparency, and accountability. Unlike traditional bots, they are built to avoid biased responses and to treat all users equally. Their goal is not only to provide accurate answers but also to earn and keep user trust. These systems rely on carefully curated datasets and continuous monitoring to reduce harmful outputs. Businesses increasingly adopt ethical AI chatbots to align with emerging global AI governance standards. By prioritizing transparency, these chatbots explain how their responses are produced, which helps users understand the answers they receive and builds long-term trust in AI-powered communication tools.

Fairness in AI Chatbot Design

Transparency and Explainability

Transparency is essential to ethical AI chatbots because users need to understand how responses are generated. Rather than acting as "black boxes," these systems provide explainable outputs that show how a response was reached. Ethical AI chatbots often include logs, reasoning layers, or simplified explanations to improve clarity. This helps users trust the system and reduces confusion in sensitive applications such as healthcare or finance. Developers also adopt open documentation and model disclosure practices. Greater transparency lets users feel more in control of their interactions, improves trust, and helps organizations comply with regulatory standards for responsible AI deployment.
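As an illustration of the logging and simplified-explanation practices described above, here is a minimal sketch, assuming each answer can be paired with the knowledge-base entries it drew on and a short plain-language reason. The `ExplainedResponse` class, its fields, and the example values (`policy-faq#claims`, `bot-v1`) are hypothetical; real systems would also need secure storage and retention rules for the log.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class ExplainedResponse:
    """A chatbot answer bundled with the context needed to explain it later."""
    answer: str
    sources: List[str]   # knowledge-base entries the answer drew on
    reason: str          # plain-language summary of why this answer was given
    model_version: str   # model/config that produced it (supports disclosure)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_response(resp: ExplainedResponse, log_lines: List[str]) -> str:
    """Serialize the response as one JSON line and append it to an audit log."""
    line = json.dumps(asdict(resp), sort_keys=True)
    log_lines.append(line)
    return line


def explain(resp: ExplainedResponse) -> str:
    """Render a short, user-facing explanation of the answer."""
    cited = ", ".join(resp.sources) if resp.sources else "no external sources"
    return f"{resp.reason} (based on: {cited}; model {resp.model_version})"


# Example usage with hypothetical values.
audit_log: List[str] = []
response = ExplainedResponse(
    answer="Your claim is eligible for review.",
    sources=["policy-faq#claims"],
    reason="Matched your question to the claims-eligibility policy",
    model_version="bot-v1",
)
log_response(response, audit_log)
print(explain(response))
```

Keeping the machine-readable log separate from the user-facing explanation lets auditors trace every answer while users see only a short, readable summary.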

Chatbot Analytics Evolved from Basic Metrics

Modern chatbot systems now use analytics that go beyond simple performance tracking. Instead of measuring only response time or user clicks, ethical AI chatbots analyze behavioral patterns to improve interaction quality. These analytics help identify bias, misunderstandings, and user dissatisfaction. With ethical AI chatbots, data is analyzed responsibly to avoid privacy violations while still improving system performance. Businesses use these insights to refine conversational flows and increase user satisfaction, and predictive analytics help anticipate user needs more accurately. This evolution lets ethical AI chatbots keep improving while maintaining fairness and transparency. Balancing data-driven insights with ethical responsibility is key to building trustworthy AI systems in competitive digital environments.
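To make bias-aware, privacy-conscious analytics concrete, here is a minimal sketch, assuming conversation logs have already been reduced to a coarse group label (here, conversation language) and a resolved/unresolved flag. It compares resolution rates across groups to surface possible unequal treatment, and it omits groups below a hypothetical minimum size (`MIN_GROUP_SIZE`) so that small groups cannot be singled out. The threshold and the choice of metric are illustrative assumptions, not an established standard.

```python
from collections import defaultdict

# Hypothetical minimum group size: smaller groups are not reported, so
# aggregate figures cannot easily be traced back to individual users.
MIN_GROUP_SIZE = 5


def resolution_rates(conversations, min_group_size=MIN_GROUP_SIZE):
    """Return the share of resolved conversations per group.

    `conversations` is an iterable of (group, resolved) pairs, where `group`
    is a coarse label such as the conversation language and `resolved` is a
    bool. Groups with fewer than `min_group_size` conversations are omitted.
    """
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for group, was_resolved in conversations:
        totals[group] += 1
        if was_resolved:
            resolved[group] += 1
    return {
        g: resolved[g] / totals[g]
        for g in totals
        if totals[g] >= min_group_size
    }


def max_gap(rates):
    """Largest difference in resolution rate between any two reported groups."""
    if len(rates) < 2:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Example: English conversations resolve far more often than Spanish ones,
# and the two French conversations are too few to report.
data = (
    [("en", True)] * 9 + [("en", False)] * 1
    + [("es", True)] * 3 + [("es", False)] * 3
    + [("fr", True)] * 2
)
rates = resolution_rates(data)
print(rates)                     # {'en': 0.9, 'es': 0.5}
print(round(max_gap(rates), 2))  # 0.4
```

A large gap does not prove bias on its own, but it flags where human reviewers should look first.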


AI Chatbots for Accessibility


Chatbots and Data Privacy Protection

Governance and Ethical Frameworks in AI Chatbot Systems

Governance plays a crucial role in ensuring responsible AI development and deployment. Organizations implement structured policies to guide how AI chatbots are trained, tested, and monitored. These frameworks ensure accountability, fairness, and transparency throughout the chatbot lifecycle. Strong governance helps reduce risks such as bias, misinformation, and data misuse. It also ensures compliance with global regulations and ethical standards. Companies adopting responsible AI practices build stronger trust with users and stakeholders. A well-defined governance model supports continuous improvement and ethical decision-making in AI systems. By integrating structured oversight, organizations can ensure chatbots remain safe, reliable, and aligned with human values while delivering consistent performance across all digital interactions.

Key Governance Principles

- Accountability in AI decision-making

- Regular bias and fairness audits

- Transparent data usage policies

- Compliance with privacy regulations

- Human oversight in AI training
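One way to put the governance principles above into practice is a release gate that blocks deployment until each requirement has recorded evidence. The sketch below is hypothetical: the `ReleaseReview` fields simply mirror the listed principles, and a real organization would define its own checks, evidence sources, and approval workflow.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReleaseReview:
    """Evidence collected before a chatbot release (fields are hypothetical)."""
    owner: Optional[str]          # accountable person or team
    bias_audit_passed: bool       # result of the latest fairness audit
    data_policy_published: bool   # data-usage policy is visible to users
    privacy_review_passed: bool   # privacy/regulatory compliance review result
    human_signoff: bool           # a human reviewer approved the release


def blocking_issues(review: ReleaseReview) -> List[str]:
    """List every governance requirement the release does not yet meet."""
    issues = []
    if not review.owner:
        issues.append("no accountable owner assigned")
    if not review.bias_audit_passed:
        issues.append("bias audit not passed")
    if not review.data_policy_published:
        issues.append("data usage policy not published")
    if not review.privacy_review_passed:
        issues.append("privacy review not passed")
    if not review.human_signoff:
        issues.append("no human sign-off")
    return issues


def can_deploy(review: ReleaseReview) -> bool:
    """A release may proceed only when no blocking issues remain."""
    return not blocking_issues(review)


review = ReleaseReview(
    owner="support-ai-team",
    bias_audit_passed=True,
    data_policy_published=True,
    privacy_review_passed=False,
    human_signoff=True,
)
print(blocking_issues(review))  # ['privacy review not passed']
print(can_deploy(review))       # False
```

Returning the full list of issues, rather than stopping at the first failure, gives reviewers a complete picture of what is still missing.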

| Framework Type | Focus Area | Key Benefit |
|---|---|---|
| Internal Policies | Company-level rules | Faster implementation and direct control |
| Industry Standards | Shared best practices | Improved consistency and trust |
| Government Regulations | Legal compliance | Strong data protection assurance |

Reducing Bias in AI Models

Industry Applications of Ethical AI Chatbots

Ethical AI chatbots are widely used in industries such as healthcare, education, banking, and e-commerce. In healthcare, they provide patient support designed to minimize misinterpretation. In education, they give students access to unbiased learning resources. Banks use them for secure customer interactions and fraud prevention, and e-commerce platforms rely on them to deliver fair product recommendations. Across all of these industries, transparency and accountability remain key priorities, and businesses adopt ethical AI chatbots to strengthen customer trust and meet regulatory requirements. These applications show how responsible AI can improve efficiency while maintaining ethical standards, and their importance will keep growing as adoption spreads.

Future of Ethical AI Chatbots

The future of ethical AI chatbots is likely to center on deeper personalization, stronger governance, and closer human-AI collaboration. Emerging technologies should make these chatbots more context-aware while preserving fairness and transparency. Future systems will likely include stronger explainability features and real-time bias correction, and organizations are expected to adopt stricter ethical frameworks to guide AI development. As global regulations evolve, ethical safeguards in chatbots are likely to become a standard requirement for digital platforms. Advances in machine learning should further improve accuracy without compromising ethical integrity, and users can expect more control over their AI interactions. Overall, ethical AI chatbots will play a crucial role in shaping a responsible and trustworthy AI ecosystem.

Conclusion

Ethical AI chatbots play a vital role in shaping a fair, transparent, and responsible digital future. They help ensure unbiased interactions, protect user privacy, and promote accountability in automated communication systems. As AI continues to evolve, maintaining ethical standards becomes essential for building trust and long-term user satisfaction. Ethical AI chatbots bridge the gap between technology and human values by offering more inclusive and explainable responses. With ongoing improvements and human oversight, these systems will continue to support safer, smarter, and more ethical digital experiences across industries and global platforms.

Frequently Asked Questions

1: What makes AI chatbots ethical?

Ethical AI chatbots are designed with fairness, transparency, and accountability in mind. They use balanced datasets, bias detection methods, and clear response logic to ensure users receive accurate and respectful answers without discrimination or hidden manipulation in interactions.

2: How do chatbots ensure fairness in responses?

Fairness is achieved through diverse training data, continuous monitoring, and bias testing. These systems are regularly updated to prevent discriminatory outputs and ensure equal treatment of users across different backgrounds, languages, and demographic groups during conversations.
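A common form of the bias testing mentioned above is counterfactual testing: send prompts that differ only in a demographic term and check whether the answers change. The sketch below assumes a chatbot can be called as a plain function; `stub_bot` and `biased_bot` are hypothetical placeholders, and exact string comparison is a deliberate simplification (real tests usually compare meaning, for example with semantic similarity scores).

```python
from typing import Callable, Dict, List


def stub_bot(message: str) -> str:
    """Placeholder chatbot used only for this example."""
    if "loan" in message.lower():
        return "You can apply for a loan online or at any branch."
    return "How can I help you today?"


def biased_bot(message: str) -> str:
    """Deliberately flawed variant that treats one group differently."""
    if "older" in message:
        return "A loan may not be suitable for you."
    return stub_bot(message)


def fill_template(template: str, groups: List[str]) -> Dict[str, str]:
    """Create one prompt per group that differs only in the group term."""
    return {g: template.format(group=g) for g in groups}


def consistency_failures(
    bot: Callable[[str], str], template: str, groups: List[str]
) -> List[str]:
    """Return the groups whose answer differs from the first group's answer."""
    prompts = fill_template(template, groups)
    baseline = bot(prompts[groups[0]])
    return [g for g in groups[1:] if bot(prompts[g]) != baseline]


template = "Customer profile: {group}. How do I apply for a loan?"
groups = ["young", "older", "female", "male"]
print(consistency_failures(stub_bot, template, groups))    # []
print(consistency_failures(biased_bot, template, groups))  # ['older']
```

Running such checks on every model update turns "continuous monitoring" into a repeatable regression test.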

3: Why is transparency important in AI systems?

Transparency helps users understand how decisions are made by AI systems. It builds trust, reduces confusion, and ensures accountability. Clear explanations, documentation, and response traceability allow users to feel more confident when interacting with AI-powered tools.

4: How is user data protected in chatbot systems?

User data is protected through encryption, anonymization, and strict access controls. Only necessary information is collected, and privacy policies are clearly communicated. Regular audits ensure data security and compliance with global privacy standards in digital communication platforms.
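Two of the practices above, collecting only necessary information and anonymizing what is kept, can be sketched as a step that runs before conversation records are stored for analytics. This is a minimal illustration under stated assumptions: the field allow-list (`ALLOWED_FIELDS`) and the regular expressions are hypothetical and far from exhaustive, and encryption and access controls are separate layers not shown here.

```python
import re
from typing import Dict, FrozenSet

# Simplified patterns for illustration; real deployments need broader,
# locale-aware detection (names, addresses, account numbers, and so on).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Hypothetical allow-list: the only fields the analytics pipeline needs.
ALLOWED_FIELDS: FrozenSet[str] = frozenset({"conversation_id", "intent", "message"})


def redact(text: str) -> str:
    """Mask e-mail addresses and phone-like numbers in free text."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def minimize(
    record: Dict[str, str], allowed: FrozenSet[str] = ALLOWED_FIELDS
) -> Dict[str, str]:
    """Drop fields outside the allow-list and redact the remaining message."""
    kept = {k: v for k, v in record.items() if k in allowed}
    if "message" in kept:
        kept["message"] = redact(kept["message"])
    return kept


record = {
    "conversation_id": "c-17",
    "intent": "billing",
    "message": "Email me at jane.doe@example.com or call +1 555 123 4567",
    "ip_address": "203.0.113.5",
}
print(minimize(record))
# {'conversation_id': 'c-17', 'intent': 'billing', 'message': 'Email me at [EMAIL] or call [PHONE]'}
```

An allow-list is used rather than a block-list so that any new field added upstream is dropped by default until someone decides it is actually needed.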

5: Can AI chatbots reduce bias completely?

While bias cannot be eliminated entirely, it can be significantly reduced. Developers use diverse datasets, fairness checks, and human review systems to minimize unfair outputs and continuously improve chatbot performance across different user interactions and real-world scenarios.

6: Where are AI chatbots commonly used today?

AI chatbots are widely used in healthcare, education, banking, and customer service. They assist users with inquiries, automate support tasks, and provide real-time responses, improving efficiency while ensuring safe, structured, and consistent communication across industries.

7: How do chatbots improve accessibility?

Chatbots improve accessibility by offering voice support, multilingual communication, and simplified responses. They help users with disabilities or language barriers access digital services more easily, ensuring inclusive interaction and equal opportunities in online environments and platforms.

8: What is chatbot analytics used for?

Chatbot analytics helps track performance, user satisfaction, and conversation quality. Advanced analytics identify issues, improve responses, and enhance user experience. It goes beyond basic metrics to provide insights that improve system intelligence and conversational effectiveness over time.

9: Are AI chatbots safe for sensitive information?

They can be, when properly designed with security protocols. Data encryption, limited storage, and strict privacy rules help protect sensitive information. However, users should avoid sharing highly confidential details unless the provider clearly documents compliance with the relevant standards.

10: What is the future of AI chatbots?

The future involves smarter, more transparent, and context-aware systems. They will improve personalization while maintaining ethical standards. Enhanced governance, real-time bias correction, and stronger privacy protections will shape next-generation conversational AI technologies across industries.

AI Chatbots in Supply Chain Management: Boost Efficiency

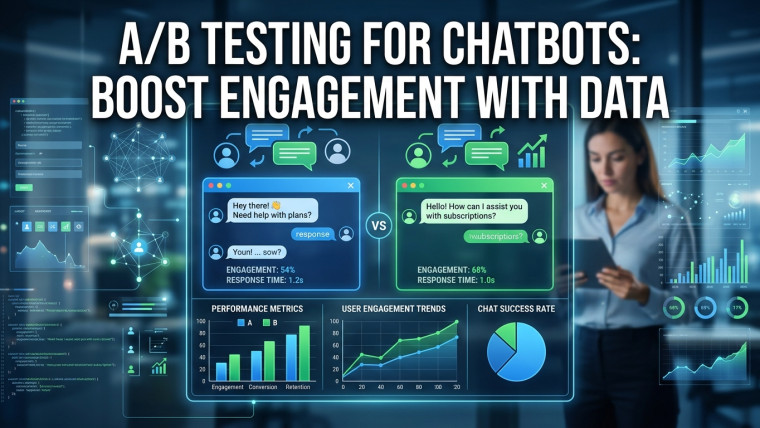

A/B Testing for Chatbots: Boost Engagement with Data

Smart Chatbots Revolutionizing Supply Chain Management

Supply Chain Chatbots: Streamlining Logistics with AI